
A few final words to end another semester, and wow that means I'm half done! Even better, that means I still have half to go! I've thoroughly enjoyed it and have begun to see how these courses build off each other, and all carry a practical association back to my own context. Through this blog activity, I found a host of resources that have helped further my thoughts on many current learning needs, and have fostered many thoughts of change. I'm a paramedic by training, and am finishing my second year of full-time teaching, after about five years of part-time teaching, and with a change in program leadership, I find myself in a position where evidence based change is encouraged. There really hadn't been any significant changes in the past decade. The former coordinator had largely built the program, arguably one of if not the most successful in the province, and based on those results it had always worked, so changes were not seen as a good thing. As student cohorts and educational views have changed, all current faculty recognize that we are not here to teach the content and administer the tests, allowing students to pass or fail on their own. We're here to teach the students, and provide them with both the best education and the best opportunity to succeed that we can.

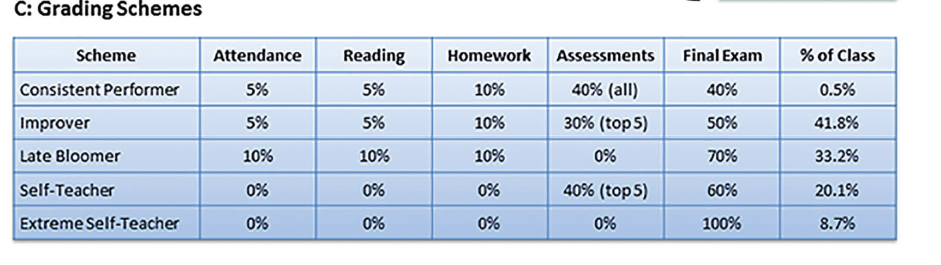

Through these reflections I've come up with many ideas that are exciting to think about, and that my program colleagues are intrigued by. Classroom delivery will be a work in progress for a while, but my lecture based theory classes are going to change to better incorporate full participation, and my method of stand-alone high-stakes evaluation will be changing. Although the various multiple choice scoring systems are very intriguing, I still fear that they may produce an atmosphere of gambling, with students spending too much time debating between options. I am however encouraged by the notion of utilizing an evaluation system that scores the best possible outcome, weighting tests in 3-5 different schemes as discussed in a previous post. The only difficulty will be my school's eGrades system used for tracking marks, as it relies on a given outline. However, I may just change this to a single final grade value and track all marks separately; it will just require a clear structure within the course outline. I'll take this chance to thank everyone for sharing your views and comments throughout the course. I genuinely enjoyed both courses I took this semester (801 was the other), and I now move on to some of the Classroom Specialist courses, with an elective in Assessment, as clearly that seems to have become a large component of my thoughts throughout this blog. Thanks for reading, and good luck going forward!
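As a rough illustration of the "best possible outcome" idea above, here is a minimal Python sketch of how a grade could be computed under several weighting schemes and the highest result kept. The function name, the two alternative schemes, and the sample scores are made up for illustration; only the 20/20/20/40 split (three tests and a final) matches the structure my theory class currently uses. It is one possible way to do the arithmetic, not a description of how eGrades works.

```python
import math

def best_weighted_grade(scores, schemes):
    """Return (grade, scheme_name) for the scheme that gives the highest grade.

    scores  -- dict mapping each evaluation to a percentage (0-100)
    schemes -- dict mapping a scheme name to a dict of weights that sum to 1
    """
    best_grade, best_name = float("-inf"), None
    for name, weights in schemes.items():
        # Every scheme must account for 100% of the final grade.
        if not math.isclose(sum(weights.values()), 1.0):
            raise ValueError(f"weights in scheme '{name}' do not sum to 1")
        grade = sum(scores[item] * weight for item, weight in weights.items())
        if grade > best_grade:
            best_grade, best_name = grade, name
    return best_grade, best_name

# Hypothetical student marks (percentages).
scores = {"test1": 62.0, "test2": 75.0, "test3": 80.0, "final": 88.0}

# "current" is the existing 20/20/20/40 split; the other two are invented examples.
schemes = {
    "current":     {"test1": 0.20, "test2": 0.20, "test3": 0.20, "final": 0.40},
    "final_heavy": {"test1": 0.15, "test2": 0.15, "test3": 0.15, "final": 0.55},
    "even":        {"test1": 0.25, "test2": 0.25, "test3": 0.25, "final": 0.25},
}

grade, name = best_weighted_grade(scores, schemes)
print(f"{name}: {grade:.2f}")  # final_heavy: 80.95
```

With these sample marks, the student who started slowly but finished strong is scored under the scheme that rewards the final most heavily. Only the resulting single value would need to be entered as the final grade, with the individual marks tracked separately.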


I've focused a lot during this blog on the current evaluation and teaching methods used within my program, and what changes might be made to improve upon these. There is another component in discussion currently, which is adjusting our entry requirements. Currently we require a collection of college stream high-school courses, typical of any program, and upon a conditional offer of acceptance, we require these applicants to attend a June/July information session where we ensure these students understand the rigors of our program, including the fitness component. To do this, we put the students through the Beep Test and Obstacle Run; we demonstrate but avoid doing the lifting tests as it requires certain safety apparel and lifting techniques taught once within the program.

Our information session fitness component is participation only, we do not hold any requirements, however there are other colleges that do. To demonstrate the relevance of this, last year we lost fifteen students to fitness in first semester. This is the high end of a normal range. We view this number as unacceptable, particularly since we let the students know they need to start training in June, for a test that occurs in December. I'll reiterate that the levels we ask for barely meet what most sources would call below average, and even unhealthy. Our proposal is that we implement a fitness requirement. Based upon Canadian Military evidence, there is research to clearly show the progression one can make and improve; for example, if you can only run to level 2 in June, there is evidence that this applicant will not be able to obtain a passing level of 5 by December. This applicant would then be denied admission, sent home with their results and a worksheet on how to improve, should they desire. Judging from last year, this would have denied six applicants entry into our program. These six were ultimately unsuccessful, and we could have used these seats in our limited enrollment program for students with a higher chance of success. We make it clear in June of the hard work necessary and the statistics of success, however students and parents always believe they'll be able to do it. I do believe statistics can be beat, but it requires such a drastic lifestyle change for these applicants that I have yet to see it happen. Our initial proposal to administration was met with hesitation, with thoughts of human rights being questioned. I admit I need to investigate this further, and within the context of my college policies, however is it any better to set students up for failure? Perhaps after another couple of years of tracking these initial results with eventual class grades we will have sufficient internal evidence to approach this again. 
Our program's biggest issue from an administrative point of view is retention, and we believe this is a valid method to improve upon this - we implemented the information sessions to attempt to improve retention and inform applicants about the fitness requirements, however it does not seem to dissuade anyone.

Many teachers I know take attendance, mostly as a fallback when students are unsuccessful, as only rare courses in my school give marks for attendance. This fallback typically demonstrates a student's lack of class attendance, and thus provides additional evidence beyond an unsuccessful grade.

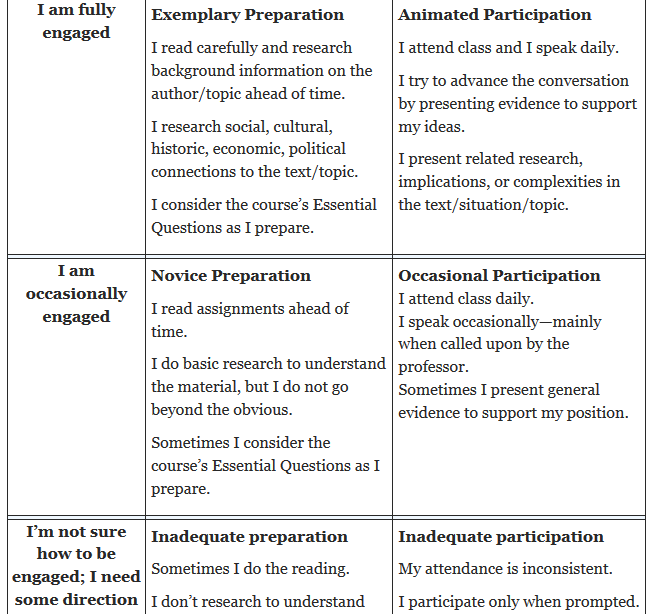

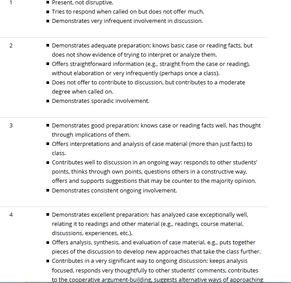

I do happen to teach one such rare class where attendance is still given a mark - Physical Fitness and Wellness. The major part of this course is physical preparation for their fitness and lifting evaluations and job competitions. I started to wonder this semester though, why am I giving away free marks to those that can roll out of bed and arrive in the gym on time, to then participate with a complete lack of effort, barely going through the motions? It's the participation that matters, not the attendance. So this thought rolled around a bit more in my brain: would this not apply to my theory classes too? Currently, there are no attendance or participation marks, but should there be? If I want students to care more than just about a number at the end of the course, should I not demonstrate what I preach by praising active participation and preparation? I want the focus to be on the material and its application, and promoting the metacognitive skills to become better learners, but a final grade based completely on tests seems contrary to this philosophy. As the teacher I have the freedom and control to change my course outline and evaluation scheme, and I think change is in the wind. I enter my third year of full-time teaching next fall, and though I have made minor changes in both content and evaluation, it's time to stop doing what was done previously, just because it was already in place and worked. Below is a rubric I found that shows how one might evaluate participation, and this can be done by both the teacher and the student. Some comments on the rubric stated that it was too complex and unnecessary to track participation this way, just put a plus or minus next to a student's name each day. I however like this rubric, or something based off of this concept, as the other important aspect is the self-evaluation and reflection, promoting that metacognition and self-regulated learning I want my students to develop.
http://www.facultyfocus.com/articles/effective-teaching-strategies/participation-points-making-student-engagement-visible/

I write this post following a recent conversation with an EMS service, who questioned what our paramedic program does to instill our graduates with a healthy physical fitness outlook. "Sure you prepare them for their fitness evaluations to get hired, but what do you do, if anything, to ensure they continue with their fitness once they graduate?"

The Background: Our students take three semesters of fitness courses, the last being an online and self directed course. The ultimate goal is to help the students train to pass the physical evaluations that must be passed to obtain employment. This consists of a beep test, an obstacle course, and two strenuous patient extrication/lifting tests. After discussing how our students progress to the self-directed class, I then posed what I think are bigger questions. If services value this attitude, and require such testing prior to hiring, then why do they not do annual tests to ensure medics are still able to do this? Cognitive abilities are tested yearly, why not fitness? We've all seen the large proportion of out of shape medics out there, so what incentive do they have when other life pressures start to take their time? Having a staff of physically fit paramedics certainly reduces injury time, and physical fitness is well established as one of the most effective coping mechanisms for stress, yet services offer very little if any incentive here. Would the savings in decreased injury and stress leave not be enough to offer greatly reduced or even free gym memberships? Paramedics are encouraged to enter various athletic events, however there is no support from the employer, even at events where medics are entering under their service name. At the extreme, those medics who actively pursue great athletic achievements on a national and international scale are only seen as those with limited availability to work, and have that time held against them. I have been happy to hear these same questions from some of my students. I applauded their critical thought and scrutiny, for their questions are legitimate. "Why do we have to do all this, when it's obvious from looking at working paramedics that fitness isn't as big a deal once you're hired?"
There is certainly validity in ensuring a base-line level of fitness to enter the workforce, you do need to be able to lift people, work around difficult terrain and accident scenes, but employers need to do more to continue this. They provide continuing medical education, why not continuing fitness opportunities? All I can do is keep pursuing the development of a fitness curriculum that inspires students to continue physical activity for life, they don't need to be "athletes", I just want them to have the skills to be healthy and well. And who knows, maybe once enough get out there with these attitudes, and these questions, employer attitudes may change.

As I was searching for more evidence to support the team teaching concept I discussed in my last post, I came across this: Team-Based Learning. In this instruction model, a focus is put on pre-class preparation and group work. Fundamentally, students are assigned pre-class material. Upon arrival in class, students are tested individually on this material, then move into their assigned groups where the test is taken again as a group. Following these assessments, groups then move to working together on problems and discussion to further the learning. This would be an interesting shift in how my theory class operates. I picked up teaching my theory classes in the traditional lecture style that has always been used, which utilizes three tests worth 20% each and one final worth 40%. This team-based approach increases the accountability of the students to keep up with their daily work. Our classes are extremely content heavy and progress rapidly, so we rarely have attendance issues, however this increased emphasis on pre-class preparation would add to the flow of the class, as well as help students develop better learning skills. A portion of the final grade then comes from the individual and team taken in-class tests, which would take some of the stress off of the current high-stakes exams.
Paramedicine is a high-stakes profession, but I don't believe education must follow this, we should be preparing them for it, not expecting it from them. The focus on team test taking would foster discussion, and the ability to use resources to verify information. There are often discussions they'll face in the workplace about how best to treat a patient, and this could provide valuable experience in listening to others, critically analyzing options, and justifying decisions. I will certainly consider a lesson plan like this, not just for change, but as there are relevant skills fostered within this method. With a lot going on, I may incorporate it into one class, or even just a unit or two within one class to see how effective I find it, and how the students do with it.

http://www.teambasedlearning.org/

One of my colleagues presented an interesting idea a few weeks ago, that came back to discussion today. His idea: that we consider team teaching. The premise is that among the three of us that are full-time faculty, we each teach similar content at different levels and scopes of practice throughout the three years of our program, and that we consider each teaching our favourite units throughout the program at each level. For example, Brad teaches the advanced care theory class in third year, I teach theory in the second year, however we both cover similar topics. In this method I might teach toxicology to my second year class, while Brad is teaching obstetrics to his third year class, then we would switch; I would take my toxicology unit with additions to the third year class, and Brad would move into the second year classroom.

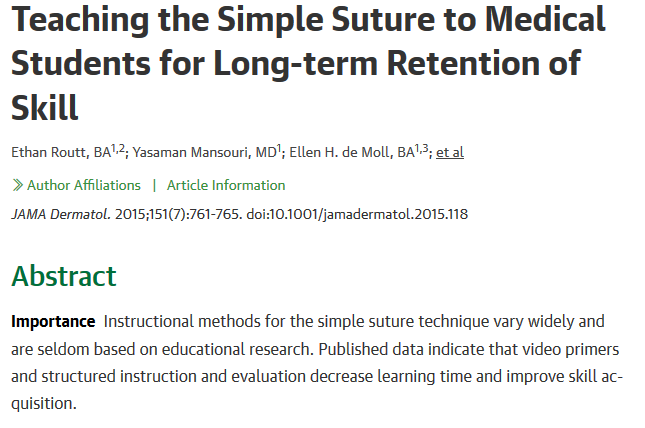

Advantages would include each of us having more focused prep time, and we would be that much more up to date and read on our individual modules. It may also prove more interesting for the students, as each teacher brings their own methods and enthusiasm. Disadvantages would be the obvious difficulty in coordinating these modules and our teaching time, as we all have busy semesters, and teach our content in an organized flow that may have to be altered. There would also be less familiarity with the students in each class, and therefore less emphasis on understanding students on an individual basis. I am intrigued by the idea, however at this time the task of coordinating teaching efforts seems very large in light of the workload we currently have, as we are in a program accreditation year. Additionally, as I am just finishing my second year of full-time teaching, I would be happy to have at least a third year to continue working on my current courses and teaching methods. Our dean has no issue with the sharing of teaching assignments like this, so if it is something we decide to try we have the support to do so. My fellow program faculty are also very excellent to work with, and we will only go ahead once all three of us feel ready or have desire to do so. So at this point, I personally would like to hold off for another year. Has anyone else taught in a similar context as this? I'd be keen to hear some first hand thoughts about the experience! This article I recently came across brought up an interesting thought, why do I teach medical skills in the manner I do? Typically, I teach these skills in the manner that I was taught, and continue to be educated through continuing medical education (CME) to maintain my working paramedic certification. As this article points out, most of these skills are taught in a manner that is not based on any evidence or research, we simply do what's always been done. 
We usually present the theory behind the intervention (as pre-reading for more advanced skills), then demonstrate in class, discuss evaluation, and break into smaller groups for simulated practice. Based on this recent research, I'm not doing a bad job, but improvements could be made. Ideally, pre-reading should be given, along with an emphasis on a pre-class instructional video, including access to the evaluative criteria that will be used. Then in class, review the material, demonstrate, followed by simulated practice. Students should then perform ten repetitions of the skill within the next ten days. Skill competence levels were retained much longer and with better evaluation scores when all these components were in place. This is an important process to consider, for as the article also states, often students learn medical interventions and skills months before actual live practice, and our instruction must be evidence based to ensure we are utilizing the most effective methods. It is also mentioned that when possible, spaced reinforcement promotes further success than a single long duration lesson and practice session. For my context, to use IV's as an example, it would be better to teach the basic initiation on a perfect patient presentation, and in subsequent shorter sessions introduce variations in technique for difficult patient populations, over attempting to teach this all at once. Overall this will be a simple and relatively small change in my teaching practice, but one that will now be evidence based and promote better long term competence, which is important to my personal philosophy. Routt, E., Mansouri, Y., de Moll, E. H., Bernstein, D. M., Bernardo, S. G., & Levitt, J. (2015). Teaching the simple suture to medical students for long-term retention of skill. JAMA Dermatology, 151(7), 761-765. 
doi:10.1001/jamadermatol.2015.118

After another meeting today, the idea of Ontario's Primary level paramedic programs progressing from a two to three year course is likely becoming an official proposal to the Ministry. Paramedicine, like education, is an ever evolving and growing profession. A job that was once driving an ambulance is becoming ever more complex, as yearly new medications and emergency procedures are added to the primary level of practice. The catch is that the program duration has not changed in decades. Even comparing to ten years ago, the scope of practice has advanced so much that primary care medics are practicing at near the level advanced care medics were just a few years ago. Obviously with this, the amount of training and education in the curriculum has increased, yet where does this time come from? Well, it doesn't. We're just asked to teach more. Our students are already in credit overload as it is, and now carry arguably the most demanding course load and steepest learning curve in the college. Is this responsible for our 60% attrition rate? Partially. Mostly this is from a complete lack of a robust entry process, but that's a whole other post / blog in itself.

This made me think of the teaching of the history of education, and how important it was we determined that teachers should know where they came from, in best order to understand where they're at, and where they may go. Likewise, I think it is equally important to continue to impart to paramedic students the history of their profession, as it also helps develop the skills they'll need to adapt to the changing industry and standards. So with all these factors at play, the college heads are proposing the paramedic program become a three year advanced diploma. This is also aligned with the terminology change that paramedics are not to be considered technicians, but clinicians - a term also that defines other three year medical programs. As an educator, this move makes sense. As a college it makes sense. It should help reduce the acute stress and workload, and retain more students. And it should also help the employers as they will be getting a more mature graduate who has had another year of personal growth via more clinical hours, more residency hours, and an overall year of life, which can mean so very much for an eighteen year old. So once the official proposal is submitted, we will wait on the Ministry's decision, which currently depending on who you know, and who you ask, is about a 50/50 split on thoughts of approval. Could there be an alternative? Services could implement a much more rigorous new employee training and mentorship program, solidifying their education and promoting a safe environment of trust and development. Unfortunately, as good as that sounds, that is not our current industry, nor do employers seem to want to spend any time, money or resources implementing this, they simply want employees to show up job ready on day one, fed to the wolves, sink or swim. A harsh reality, and one that would require a culture shift to successfully accomplish. 
Within the program I teach, our practical lab and theory courses share content yet are independently evaluated. Some colleges have elected to put them together, more typical of anatomy or science classes that carry attached labs, but our school has always kept them separate.

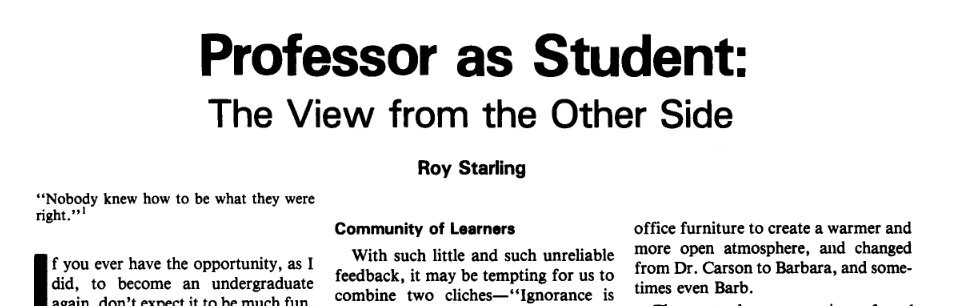

As I think about going forward and envision changes I'd like to implement, the concepts of collaborative inquiry and increased student participation keep coming up, even for theory classrooms. These classes for the past many decades have been your typical lecture based room, sure the teachers use constant real-world examples to ensure relevance, but the student role is passive. Evaluation is done strictly by examinations. The argument for this has been that we ensure the students have a basic grasp of fundamental concepts, then later that week it is applied in lab and practiced. The students that typically excel here are those that come from a university background, those that have become "professional students", and are typically those that do well in a lecture hall and have the ability to synthesize spoken word into practice. This student is the minority in a college classroom, where more and more our cohorts are coming straight from college-stream high-school. Would this type of student, and all students really, not benefit from a more engaging classroom setting? Unfortunately, appealing to students to come better prepared to class to actively discuss our way through new concepts and apply them to case studies isn't enough, inevitably many are not motivated by this intrinsic appeal. They are often results driven, so how to externally motivate these reluctant numbers? I've been looking at a few class participation rubrics that may help remedy this. It is a quick way to scale quality of participation, and would provide the motivation to come to class ready to actively engage. The argument against this would be the question of watering down the course with such participation marks, but ultimately I think this does the opposite, strengthening not only the knowledge of content, but also imparting valuable good learning habits. 
The following link is to one such rubric, a simple 0-4 scale, which after an adjustment period I'm sure I could reflect on after each class and apply to my 25-30 students.

http://cte.virginia.edu/resources/grading-class-participation-2/

I found an interesting article by Starling that describes his semester returning to the classroom as a student. In his college setting, for professional development, one teacher each semester is selected to suspend their teaching and enroll in a full-time semester as a student. The premise is to have the teacher view other classrooms and teachers from the student perspective, instead of just attending as a peer reviewer. The teacher is to assume the role of a "master-learner" and assist newer students, in addition to doing all assignments and tests themselves.

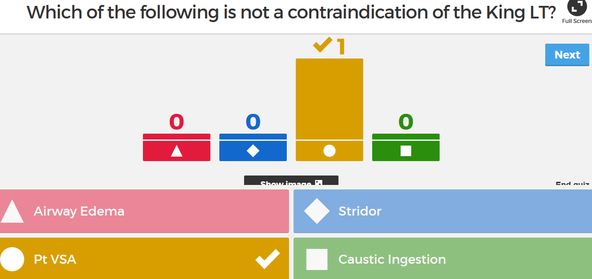

One of the driving forces is to increase the amount of self-reflection, as so often our feedback is derived from anonymous student surveys and very rare and brief supervisor visits. Some of Starling's take-aways include focusing the first day on content and assignment relevance, employing increased journal activity and group discussion over exams and lectures, and having increased empathy for student concerns and questions. I don't foresee this being an activity my school would endorse, primarily because many of our programs have a small faculty, such as three full-time teachers in my program, so the resources to cover the classes simply aren't there. However, I could envision a few hours set aside for teachers to visit a handful of classes, it would just take a shift to move away from being there to critique, to being there to absorb and reflect. In another course during a group discussion, colleagues who teach in Korea and Vietnam discussed how this type of PD is already being done, where teacher visits to other classrooms are the norm. Here in North America, this would most often be viewed as an intrusion, we've got work to do! Starling, R. (1990). The impact of alcoholism: The writer, the story, the student. College Teaching, 38(3), 88-92. doi:10.1080/87567555.1990.10532200 I decided to finally check out a tool that our college teaching development team has been raving about all year, Kahoot. As a staff, we had played a short ten question game of it during back to school meetings in the fall, but there was so much going on little attention was really given to it. First of all, part of its intrigue are the two words I used to describe my experience, play and game.

So what is Kahoot? I discovered it's a free online quiz program. So I just now made an account, and looked at the developing side of it. It's very intuitive, simple, and quick to use. You write a question, link video or images if you choose, and write your choices. When you're ready to play, you select the quiz on a central device from the developing site. The participants then google "kahoot" on their smartphone, tablet, laptop etc., and go to the Kahoot players website, which immediately asks for a pin number that is shown on the central screen, and then the quiz begins. You can select music, question timers, and question scoring which also scores for speed and displays a leader board. I'm not necessarily a fan of the latter, but the options are there. You can also select your questions to be randomized, as well as the answers, and statistics display the number of correct responses to each question. Essentially this is like an evolved TurningPoint. Reviewing this way is certainly more engaging than the traditional methods, and reduces student anxiety as they have the ability to answer anonymously if the points option is not selected. This could prove to be a good group assignment, having a small group begin each class reviewing previous material. The drawbacks are the 1,000 character question limit, as well as limited space for the answers, so it seems to be good for factual type questions only, and does not seem to be able to incorporate multiple multiples - a standard question type of paramedic testing. I often like to quiz with short answers to truly ascertain comprehension and recall, not just recognition, but this will certainly be an interactive tool that will add variety to my classroom. The developer site is found at: getkahoot.com The students would then visit: https://kahoot.it/#/ Check it out, super easy.
In replying to a philosophical article response, I came across the question of those reluctant to practice reflective teaching, and refusing to change even in the face of evidence of a better method.

It made me think of two resources I've used in PME 801 that I'm also currently taking. In the article depicted above (and linked below), DuFour describes the traditional teacher position of working in isolation. A teacher's professional practice was kept under a veil. The movement today is towards a collaborative model, but how do we encourage this? Donohoo and Velasco contend that collaboration should be voluntary to promote the best engagement from a sense of ownership, and that mandating this would result in resistance. DuFour however argues the opposite. The time for polite encouraging is over; it is a professional and ethical responsibility of teachers to engage in collaboration, ever seeking methods to better themselves for the betterment of their students. Indeed, Donohoo and Velasco cite many sources that state student benefit gains are quadrupled when teachers collaborate, and use evidence from this to adapt their practice. In this regard, DuFour continues his argument, stating that evidence trumps precedent, again following that such change is the moral and ethical duty of the teacher. Of course there will be growing pains as collaboration continues to develop, but that's a different problem, one that will be a happy result as it means collaboration is at least beginning. So I pose the question, should collaboration be considered a voluntary endeavor as Donohoo and Velasco believe, and as is endorsed by Learning Forward Ontario, or is it time to take a stronger stand with DuFour and demand change?

http://www.jstor.org.proxy.queensu.ca/stable/pdf/27922512.pdf

Donohoo, J. and Velasco, M. (2016). The Transformative Power of Collaborative Inquiry: Realizing Change in Schools and Classrooms, Thousand Oaks, CA: Corwin.

In my department's recent PD meeting, we were given a quick introduction to the term lateral violence.
This was initially just part of the introduction of a new Human Resources employee, whose past work focused on working in US hospitals to identify and decrease this. However, what began as an introduction quickly became thirty minutes of meeting digression.

Essentially, lateral violence is all the traditional indirect and sometimes direct aggression or violence directed towards one's peers. Examples include non-verbal innuendo (eyebrow raising), undermining activities, backstabbing, broken confidences, etc. There are only three full-time faculty in my program, and about eight part-time. We all work very closely, and the three of us full-time faculty share office space, and collaborate and work together in detail every single day. Another program within our department that is much larger immediately began to show obvious disdain between smaller groups of their whole. Even as we discussed these concepts, the display of lateral violence within the room was immediate and obvious. It was hard, and sadly entertaining, to see such behavior among my colleagues and between peers that were supposed to be on the same team. The majority of them agreed that we should as an entire school engage in lateral violence training, which would include carrying pocket cards with pre-written replies for whenever we encounter such acts. I chose to bring this up, because it seems to relate to what I've been reading elsewhere in this PME 811 course, regarding noticing behavior and working to change it. A large part of that however, is noticing behavior you wish to change for the betterment of others, and perhaps those involved here don't wish to? It sounds like it has reached an extent where it is affecting teacher attendance and life outside of the workplace. Perhaps this group needs to identify a project to work on with a skilled facilitator as suggested by Bradley Ermeling and LearningForward. Perhaps this behavior requires what Noddings termed confirmation, where instead of criticizing their behavior, they are pointed towards a better course of action - similar to the lateral violence approach but with less officialdom. Has anyone else been introduced to this type of terminology or training?
Perhaps I am naive to think that as professionals we should be able to put aside differences and work together, after all we should be modelling the behavior we aspire to see in our students.

For more information on Lateral Violence: https://www.arnnl.ca/sites/default/files/Civility%20and%20Nursing%20Practice.pdf

Noddings, N. (2010). Moral education in an age of globalization. Educational Philosophy and Theory, 42(4), 390–396. doi: 10.1111/j.1469-5812.2008.00487.x

https://learningforward.org/publications/learning-principal/learning-principal-blog/learning-principal/2012/10/24/the-learning-principal-fall-2012-vol.-8-no.1

Innovative teaching methods vs the traditional university lecture

The above link is a BBC write-up comparing traditional lecture based classrooms and newer innovative styles. I found value in this short article as it painted a comparison with benefits to each side, not just an argument for innovation. It describes that although traditional lectures carry the connotation of being dull, this is more properly attributed to the teacher, not the method. We've all had lecturers we've enjoyed, and those that were monotone and dull. An enthusiastic teacher can bring the lecture hall alive, clarifying complex topics, and providing experience based teaching. It also describes how in a technology filled life, some students prefer this method away from devices. In the end, it is suggested that the traditional lecture should at least incorporate some degree of novelty and technology to invigorate the senses and improve student engagement. It suggests the use of videos, although I've been incorporating videos, demonstrations, and small case studies with class involvement for years, and always considered this still to be traditional lecturing. Collaboration, it is noted, is the next step, and it also mentions classroom architecture as a roadblock. Very true. I am often assigned the classic amphitheater style lecture hall, with small swivel-up desktops. Clearly this classroom did not have student collaboration in mind, and indeed makes me think I should work to avoid this room! Many other high-tech rooms have immovable desks with hardwired plug-ins on each, which although an innovative approach to encourage technology, does nothing to encourage collaboration - unless using technological platforms, but I would argue there is something to be said for in-person interaction out from behind a computer screen. The best group work I've done was teaching in a regular classroom with regular moveable desks, where instead of teaching pre-hospital documentation by lecture, student collaboration was used.
The students were given sample paramedic calls and a documentation manual, taught themselves and each other in small groups, and then audited the forms of another group with different call information to further their understanding. This reflection has made me consider requesting the traditional "low-key" classroom for more classes, where it will be easier to incorporate more diverse learning opportunities. Ideas are whirling...

Thanks to the comments of Leah on my last post, I followed Leah's link to a TED Talk by Martin Bush about different methods of evaluating multiple choice tests. I've requested the original journal article behind the premise of his talk, however the direct request can take a few days it seems, if it is approved at all.

The TED Talk did a fantastic overview however. Essentially, you can use the same multiple choice test, but assign different values and schemes to the scoring. The concept here is to eliminate the guess-work, and improve the credibility of the test as an effective evaluation of knowledge.

https://youtu.be/ACB2B2EdiXs

Martin describes a few different methods, which I'll try to simplify here. In each example, X marks an option the student selected.

Negative Marking: 3 marks for a correct response, -1 for an incorrect one.

a) wrong
b) wrong X
c) correct
d) wrong
Student would receive -1 for this question.

The theory here is that for guessing, there is now a 1/4 chance of scoring 3 marks, and a 3/4 chance of scoring -1, so these should equalize. This can however ultimately lower test averages, and nobody likes being graded in a negative environment. That said, my profession is very much like this: do well and save a life, get 3 points; do poorly and not save the life, -1.

Subset Selection: You may select more than one answer.

a) wrong
b) wrong X -1
c) correct X +3
d) wrong
Student would receive 2 marks.

a) wrong
b) wrong X -1
c) correct X +3
d) wrong X -1
Student would receive 1 mark.

This marking scheme begins to demonstrate knowledge, particularly in the common case where the student is able to eliminate two options and is left with the classic 50:50; now they may select both answers and achieve 2 marks. A concern I have here: how much time will students spend debating over what they're willing to risk on each question? Should I go for the 3 marks, or play it safe with 2? Will this increase test anxiety?

Subset Selection Without Negative Marking:

a) wrong
b) wrong X
c) correct X
d) wrong
Student would receive 1/2 mark.

a) wrong
b) wrong X
c) correct X
d) wrong X
Student would receive 1/3 mark.

a) wrong
b) wrong
c) correct X
d) wrong
Student would receive 1 mark.

a) wrong
b) wrong
c) correct
d) wrong
Student would receive 1/4 mark.

I find this to be a highly positive test, where one could answer nothing and still achieve 25%!
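To play with the numbers, the three schemes above can be sketched in a few lines of Python. This is only my own rough sketch of the worked examples, not Martin Bush's formulation: the function names are made up, and two cases the examples don't cover are assumptions on my part (a blank answer under Negative Marking scores 0, and a selection that leaves out the correct option scores 0 under Subset Selection Without Negative Marking).

```python
from fractions import Fraction

OPTIONS = ("a", "b", "c", "d")  # four-option questions, as in the examples


def negative_marking(selected, correct, reward=3, penalty=1):
    """One answer allowed: +3 if right, -1 if wrong.
    Assumption: a blank answer (None) scores 0."""
    if selected is None:
        return 0
    return reward if selected == correct else -penalty


def subset_selection(selected, correct, reward=3, penalty=1):
    """Several answers allowed: +3 if the correct option is among
    those selected, -1 for each wrong option selected."""
    return sum(reward if option == correct else -penalty for option in selected)


def subset_no_negative(selected, correct, options=OPTIONS):
    """Several answers allowed, no penalties: 1/k of a mark when the
    correct option is among the k selected. Selecting nothing is treated
    like selecting everything (1/4 mark), as in the last example.
    Assumption: leaving out the correct option scores 0."""
    chosen = set(selected) or set(options)
    if correct not in chosen:
        return Fraction(0)
    return Fraction(1, len(chosen))


# Average mark for a blind guess under Negative Marking:
# 1/4 chance of +3 and 3/4 chance of -1.
expected_guess = sum(Fraction(1, 4) * negative_marking(o, "c") for o in OPTIONS)

print(negative_marking("b", "c"))               # the Negative Marking example
print(subset_selection({"b", "c"}, "c"))        # first Subset Selection example
print(subset_no_negative({"b", "c", "d"}, "c")) # second no-negative example
print(expected_guess)
```

Running the same answer sheet through all three functions makes it easy to compare how a class average would shift under each scheme before committing to one.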
This final method is still being studied to determine its effective quality, however the Subset Selection was presented to statistically match the profile of what a good test is meant to show, that increasing knowledge would yield increasing test scores. My initial thoughts are this would be easy to implement with current tests, however the grading would be significantly more time consuming. Last year my school switched from the "scan-tron" to "grade-cam", both utilize the bubble-sheets, however now we can mark instantly with a smartphone app or classroom camera. This technology would not accommodate this type of grading and the selection of multiple different answer patterns, which would mean marking 70 multiple choice questions for 30 students, where each answer must be interpreted, not just mindlessly checked to a defined answer key, and we don't have TA's! I have an opportunity to implement this in a few weeks with a smaller class size, if the other group teachers agree - a multiple choice test written in our smaller lab sections. This would also give me a great insight compared to traditional scores, and doing so in an easily manageable group of students. I need to spend some time playing with the scoring variability to determine if I prefer the Subset Selection or Subset without Negative Marking. I can appreciate how these methods were shown to lower test averages already just playing with some numbers, where students will likely achieve many half marks, but I do like this concept of working to eliminate some of the guesswork and better demonstrate their thought process of ruling out options. I wonder however, does not answering a question with the most correct incorrect option also display this? They didn't pick the right option, but they picked the next best one. I'd love to hear any thoughts or other variations people have tried with this, and if there are opinions both from a student or teacher perspective. 
If a multiple choice test is being utilized, is this a better option than the traditional scoring?

I’ve been trying to put some further reflection into my testing methods in the upper year pathophysiology class I teach. As I have mentioned briefly, the course has traditionally been tested using high-stakes multiple choice tests. These tests have been designed to follow the structure of the provincial certifying exam written after graduation. My program has held the highest ranking on this provincial test for the past 13 years, with a 100% pass rate, and no other school is close to boasting that. I understand however, that just because this is the way we’ve always done it, this does not mean that it is the right way. In my last post I looked at an alternative grading scheme that could even continue to utilize the current tests, but that would perhaps give students the best opportunity to do well. On one hand it doesn’t penalize a single poor performance if one achieves well elsewhere; on the other hand, in the field of paramedicine failing to do well on a single call may have drastic consequences, and hence we’ve always been okay with high-stakes testing. I’ve also heard of a multiple-choice scheme where the test is given in class, which is worth two-thirds, and then the test is taken home until next class for the students to write again using their notes and resources, worth the remaining third, in an attempt to deepen learning. Reviewing a multiple choice guide from the University of Texas, I found a few tips for creating multiple choice tests. It was interesting to see how many of the “do’s” I don’t do. Why? For the same reason I’ve brought up previously: the provincial certification exam is written in a confusing manner with often poorly written questions, so as a program we take it upon ourselves as a duty to prepare students for this exam, which will then allow them to find gainful employment and a fulfilling career.
After all, is this not why they came to college in the first place? Within the Texas document, it does discuss some positives of multiple choice testing, which I have found highly beneficial. Of these, namely the quick feedback, and the ability to statistically analyze the data. It does warn however that learning can be lessened if the test is written poorly, as students will study for recognition, not recall. Additionally, poorly written tests will allow test-wise students to find answers throughout the test, which I do on purpose to teach students how to better write tests. It suggests to avoid tricks, minimize reading, and ensure there is just one correct answer. I incorporate small degrees of all of these, again to teach students how to write such tests, as not only will their provincial exam be like this, but the majority of their hiring-competition written tests as well. It is as though they are often written by those trying to prove how smart they are, not ascertain the knowledge of the applicants. Since I took this course over now two years ago, I’ve taken the degree of multiple choice down to 70% of each test, with 30% short-answer, to further emphasize a deeper learning and engagement with the material. I’m brainstorming with ideas to incorporate more case-based learning and inquiry through assignments, to supplement the traditional exam style of the class. My thoughts are this may increase engagement, give the students more ownership of their learning, improving material contact, while still administering the now lower-stakes multiple choice tests to ensure post-graduation readiness. As I’m in the reflection / brainstorming phase, I would greatly appreciate any feedback and experience with those under the pressures of standardized tests, who have been influenced to teach to a test, and from those that successfully made the leap away from the traditional. 
https://facultyinnovate.utexas.edu/teaching/check-learning/question-types/multiple-choice

Currently within my program and individual courses, there is so much material crammed in that we utilize every minute of our class time, and often more. This is in addition to having an overloaded program, where students carry above what is considered a full-time complement of courses. To this end, we often discuss assessment. We’ve undergone a radical and effective evaluative change in our practical lab components, however our theory classes have remained very traditional. The biggest driving factor of this is the provincial certification exam post-graduation, which is a six hour comprehensive multiple choice exam, written to be confusing, to distract with non-essential material, with page long questions, and a focus on multiple-multiples. I don’t agree with the format of this exam, but that’s for a different post. So, that’s why we haven’t yet ventured towards other assessment strategies; we feel our students need the practice of this style of exam and questions. As a college program, our job is to help students acquire the skills needed to obtain employment and build a successful career and healthy life through their chosen profession, and part of this is ensuring they will obtain certification. Today I was reading an article from Faculty Focus, and within the comments there was the following shared resource:

http://www.lifescied.org/content/16/1/ar2

This article shed some light on this dilemma for me: a way to continue to give students the style of questions they need to practice in a legitimate setting, and a novel way of evaluating to improve overall performance as well as impart successful generalizable strategies. The premise is to provide students with weekly cumulative exams, immediate feedback, and encouragement to improve upon metacognitive strategies.
This demonstrated increased student success in higher order questions, even when question content had not been tested before. To take this a step further, the study also shows how a grading scheme was used that incorporated five different weights to each assessment, to allow for students to improve with time, decline with time, or maintain performance. Marks were input to a spreadsheet, and whatever method identified the highest mark, that was the score utilized for that student. It helps students by:

Be Aware Of:
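The best-of-several-weightings idea described above is simple enough to sketch in Python rather than a spreadsheet. This is a rough sketch only: the article's actual five weightings aren't reproduced here, so the scheme names and percentages below are hypothetical placeholders I made up for a course with four tests, each set summing to 100.

```python
# Hypothetical weighting schemes (percent of final grade per test).
# The real study used five schemes; these particular numbers are invented.
WEIGHT_SCHEMES = {
    "equal":      (25, 25, 25, 25),
    "improving":  (10, 20, 30, 40),  # rewards students who improve with time
    "declining":  (40, 30, 20, 10),  # protects students who decline with time
    "middle":     (20, 30, 30, 20),
    "late_heavy": (15, 15, 30, 40),
}


def weighted_mark(marks, weights):
    """Final mark out of 100 for one weighting scheme."""
    return sum(m * w for m, w in zip(marks, weights)) / 100


def best_final_mark(marks, schemes=WEIGHT_SCHEMES):
    """Score the student under every scheme and keep the highest result.
    Returns (scheme_name, final_mark)."""
    results = {name: weighted_mark(marks, w) for name, w in schemes.items()}
    best = max(results, key=results.get)
    return best, results[best]


# A student whose test marks climb over the semester.
print(best_final_mark([50, 60, 70, 80]))
```

Because each student is simply given the maximum across schemes, the course outline would need to list every scheme up front, with only the resulting final grade entered into the marks tracking system.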

This is certainly an idea I’m going to let simmer a bit and discuss with my colleagues. We currently have classes with infrequent and high-stakes exams; is this truly necessary? The biggest concern I initially hold is the class time this would utilize. Could this be offset by increased readings / self and group work assignments? I think the biggest benefit is the increase in student metacognitive and self-regulating skills.

Today I read a recent article on Faculty Focus, Assignment Helps Students Assess Their Progress by Christina Moore, where she describes a simple yet highly effective way to not only check on the progress of your students, but more importantly, for the students to check in on themselves.

Following this outline, I will set up a simple Moodle assignment for my class to do, which will not be graded, but will be a mandatory assignment, where the following course material will not be available until this is complete. I am going to ask the students to submit a journal style reflection of their progress in the course thus far, if it meets their expectations, what their goals are for the remainder of the course, and how they intend to meet those goals. The assignment will also involve the student stating their current grades and attendance. I've been looking for ways to continuously improve the self-regulated skills of my students, and I believe this will be an easy task to employ which will yield great results. Students will employ self-reflection, and make an honest self-evaluation statement about where they stand in the course, taking ownership for their performance and attendance. Many students will likely just need to continue their current habits going forward, some will need to form new strategies, but all will be reflecting at an opportune time in the semester. This will also allow me to gauge the overall feel of the class as a whole, and offer personalized feedback, opening up dialogue that some students may otherwise not have had. It will further promote feedback undoubtedly about the course itself and perhaps my own teaching practices and material, which will also give me an opportunity to reflect, adapt, and carry on. Win-win. After reading Collaborative Inquiry; A Facilitator’s Guide, a publication by Learning Forward Ontario written by Jennifer Donohoo, I was intrigued to find out if there had been any follow-up to the suggested practice. The Guide describes a process by which schools may undertake collaborative inquiry as a means to improve student outcomes. Did schools actually implement this? If so did they have success? Who provided the facilitation, was it the principal, a teacher, someone brought in? 
The Guide brought forward many great concepts, however I was left with thoughts about participation and process outcomes, would educators take part in this? Is there time for this? I’ve been part of the beginning phases of a process like this, which quickly fizzled out, how do we generate a genuine inquiry?

To begin to answer some of these questions, I read The Transformative Power of Collaborative Inquiry: Realizing Change in Schools and Classrooms, by Donohoo and Velasco. What follows are my major takeaways from this book, which proved to elaborate on many of the questions I was left with after reading the Facilitator’s Guide. Promoting Participation

Factors for Success

Shifting Perceptions

Donohoo, J. and Velasco, M. (2016). The Transformative Power of Collaborative Inquiry: Realizing Change in Schools and Classrooms. Thousand Oaks, CA: Corwin.

As a college teacher, this has been a topic of debate since I began my teaching career about seven years ago. There have been many both for and against students utilizing technology in the classroom, be it smartphone or laptop.

Those for the use believe that students in this era are so accustomed to the technology, that in fact their very being comprises this technology, and thus does not distract. It is questioned that the alternative may be more true, that being without the smartphone and online access is more distracting and thus detrimental. A study published recently demonstrated that laptop use in a classroom not only can decrease performance of those utilizing them, but also of those students sitting near them in class. Interestingly, the decrease in performance is seen most dramatically in those students that typically perform above average. It is postulated this is perhaps due to the over-confidence of these high-achievers of their ability to multi-task. My own anecdotal evidence suggests the use of laptops interferes with their self-regulated learning habits. Those accustomed to taking traditional hand-written notes often re-write these after class, followed by further research into areas of interest or confusion, and adding this to their notes as well. Having word-processed notes perhaps eliminates the need to re-write them, and they're likely spending time in class researching further into areas of immediate interest and thus missing parts of the subsequent lecture. I know this topic falls slightly outside the topics of this course, however it was brought back up in a recent forum discussion, and has rekindled my interest. Has anyone else, particularly those teaching at the post-secondary level, had experience in classrooms that have allowed technology use? Currently my school supports the teacher's discretion to allow or disallow, so long as it doesn't preclude or single out those who require supportive accommodation. My practice is to leave it to personal choice of each student, after providing them with my own study habits and thoughts on note taking, along with my understanding of the potential distraction that laptops may cause them. 
This recent study also focuses on the distracting effect of technology on students sitting in proximity to those using laptops, so I may take the advice given and designate certain areas of the room for technology users. Thoughts?

http://seii.mit.edu/wp-content/uploads/2016/05/SEII-Discussion-Paper-2016.02-Payne-Carter-Greenberg-and-Walker-2.pdf

https://www.washingtonpost.com/news/wonk/wp/2016/05/16/why-smart-kids-shouldnt-use-laptops-in-class/?utm_term=.5c7490ef6214

http://www.cbc.ca/news/technology/laptops-classroom-digital-distraction-1.3949743

https://teachingcenter.wustl.edu/2015/08/laptop-use-effects-learning-attention/

https://web.stanford.edu/class/linguist156/laptops.pdf

|

Author: I teach Paramedicine at Cambrian College, in Sudbury, Ontario. I also continue to work as a paramedic, and ride bikes. This is my third semester in the PME program, and I look forward to learning with everyone!

Archives: March 2017
