|

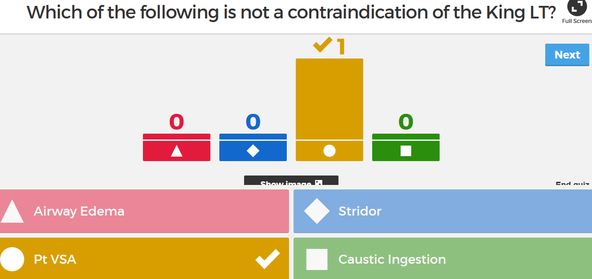

I decided to finally check out a tool that our college teaching development team has been raving about all year, Kahoot. As a staff, we had played a short ten-question game of it during back-to-school meetings in the fall, but there was so much going on that little attention was really given to it. First of all, part of its intrigue lies in the two words I used to describe my experience: play and game.

So what is Kahoot? I discovered it's a free online quiz program. I made an account just now and looked at the developing side of it. It's very intuitive, simple, and quick to use. You write a question, link video or images if you choose, and write your answer choices. When you're ready to play, you select the quiz from the developing site on a central device. The participants then google "kahoot" on their smartphone, tablet, laptop, etc., and go to the Kahoot players' website, which immediately asks for a PIN that is shown on the central screen, and then the quiz begins. You can select music, question timers, and question scoring that also rewards speed and displays a leaderboard. I'm not necessarily a fan of the latter, but the options are there. You can also have your questions randomized, as well as the answers, and statistics display the number of correct responses to each question. Essentially this is like an evolved TurningPoint.

Reviewing this way is certainly more engaging than the traditional methods, and it reduces student anxiety, as students have the ability to answer anonymously if the points option is not selected. This could prove to be a good group assignment, having a small group begin each class by reviewing previous material. The drawbacks are the 1,000 character question limit, as well as limited space for the answers, so it seems to be good for factual type questions only, and it does not seem able to incorporate multiple-multiples, a standard question type in paramedic testing. I often like to quiz with short answers to truly ascertain comprehension and recall, not just recognition, but this will certainly be an interactive tool that will add variety to my classroom.

The developer site is found at: getkahoot.com

The students would then visit: https://kahoot.it/#/

Check it out, super easy.


In replying to a philosophical article response, I came across the question of those reluctant to practice reflective teaching, and refusing to change even in the face of evidence of a better method.

It made me think of two resources I've used in PME 801, which I'm also currently taking. In the article depicted above (and linked below), DuFour describes the traditional teacher position of working in isolation. A teacher's professional practice was kept under a veil. The movement today is towards a collaborative model, but how do we encourage this? Donohoo and Velasco contend that collaboration should be voluntary to promote the best engagement through a sense of ownership, and that mandating it would result in resistance. DuFour, however, argues the opposite. The time for polite encouragement is over; it is a professional and ethical responsibility of teachers to engage in collaboration, ever seeking methods to better themselves for the betterment of their students. Indeed, Donohoo and Velasco cite many sources that state student benefit is quadrupled when teachers collaborate and use the resulting evidence to adapt their practice. In this regard, DuFour continues his argument, stating that evidence trumps precedent, again following that such change is the moral and ethical duty of the teacher. Of course there will be growing pains as collaboration continues to develop, but that's a different problem, and a happy one, as it means collaboration is at least beginning.

So I pose the question: should collaboration be considered a voluntary endeavor, as Donohoo and Velasco believe and as is endorsed by Learning Forward Ontario, or is it time to take a stronger stand with DuFour and demand change?

http://www.jstor.org.proxy.queensu.ca/stable/pdf/27922512.pdf

Donohoo, J. and Velasco, M. (2016). The Transformative Power of Collaborative Inquiry: Realizing Change in Schools and Classrooms. Thousand Oaks, CA: Corwin.

In my department's recent PD meeting, we were given a quick introduction to the term lateral violence. This was initially just part of the introduction of a new Human Resources employee, whose past work focused on identifying and decreasing this in US hospitals. However, what began as an introduction quickly became thirty minutes of meeting digression.

Essentially, lateral violence is all the traditional indirect, and sometimes direct, aggression or violence directed towards one's peers. Examples include non-verbal innuendo (eyebrow raising), undermining activities, backstabbing, broken confidences, etc. There are only three full-time faculty in my program, and about eight part-time. We all work very closely, and the three of us full-time faculty share office space and collaborate in detail every single day. Another, much larger program within our department immediately began to show obvious disdain between smaller groups within it. Even as we discussed these concepts, the display of lateral violence within the room was immediate and obvious. It was hard, and sadly entertaining, to see such behavior among my colleagues and between peers who are supposed to be on the same team. The majority of them agreed that we should, as an entire school, engage in lateral violence training, which would include carrying pocket cards with pre-written replies for whenever we encounter such acts.

I chose to bring this up because it seems to relate to what I've been reading elsewhere in this PME 811 course regarding noticing behavior and working to change it. A large part of that, however, is noticing behavior you wish to change for the betterment of others, and perhaps those involved here don't wish to? It sounds like it has reached the extent that it is affecting teacher attendance and life outside of the workplace. Perhaps this group needs to identify a project to work on with a skilled facilitator, as suggested by Bradley Ermeling and Learning Forward. Perhaps this behavior requires what Noddings termed confirmation, where instead of criticizing their behavior, they are pointed towards a better course of action - similar to the lateral violence approach but with less officialdom. Has anyone else been introduced to this type of terminology or training? Perhaps I am naive to think that as professionals we should be able to put aside differences and work together; after all, we should be modelling the behavior we hope to see in our students.

For more information on Lateral Violence: https://www.arnnl.ca/sites/default/files/Civility%20and%20Nursing%20Practice.pdf

Noddings, N. (2010). Moral education in an age of globalization. Educational Philosophy and Theory, 42(4), 390–396. doi: 10.1111/j.1469-5812.2008.00487.x

https://learningforward.org/publications/learning-principal/learning-principal-blog/learning-principal/2012/10/24/the-learning-principal-fall-2012-vol.-8-no.1

Innovative teaching methods vs the traditional university lecture

The above link is a BBC write-up comparing traditional lecture-based classrooms and newer innovative styles. I found value in this short article as it painted a comparison with benefits to each side, not just an argument for innovation. It describes that although traditional lectures carry the connotation of being dull, this is more properly attributed to the teacher, not the method. We've all had lecturers we've enjoyed, and those that were monotone and dull. An enthusiastic teacher can bring the lecture hall alive, clarifying complex topics and providing experience-based teaching. It also describes how, in a technology-filled life, some students prefer this method away from devices. In the end, it is suggested that the traditional lecture should at least incorporate some degree of novelty and technology to invigorate the senses and improve student engagement. It suggests the use of videos, although I've been incorporating videos, demonstrations, and small case studies with class involvement for years, and always considered this still to be traditional lecturing.

Collaboration, it is noted, is the next step, and it also mentions classroom architecture as a roadblock. Very true. I am often assigned the classic amphitheater-style lecture hall, with small swivel-up desktops. Clearly this classroom did not have student collaboration in mind, and indeed it makes me think I should work to avoid this room! Many other high-tech rooms have immovable desks with hardwired plug-ins on each, which, although an innovative approach to encourage technology, does nothing to encourage collaboration - unless using technological platforms, but I would argue there is something to be said for in-person interaction out from behind a computer screen. The best group work I've done was teaching in a regular classroom with regular moveable desks, where instead of teaching pre-hospital documentation by lecture, student collaboration was used. The students were given sample paramedic calls and a documentation manual, taught themselves and each other in small groups, and then audited the forms of another group with different call information to further their understanding. This reflection has made me consider requesting the traditional "low-key" classroom for more classes, where it will be easier to incorporate more diverse learning opportunities. Ideas are whirling...

Thanks to the comments of Leah on my last post, I searched out (by following Leah's link) a TED Talk by Martin Bush about different methods of evaluating multiple choice tests. I've requested the original journal article behind the premise of his talk; however, the direct request can take a few days it seems, if it is even approved.

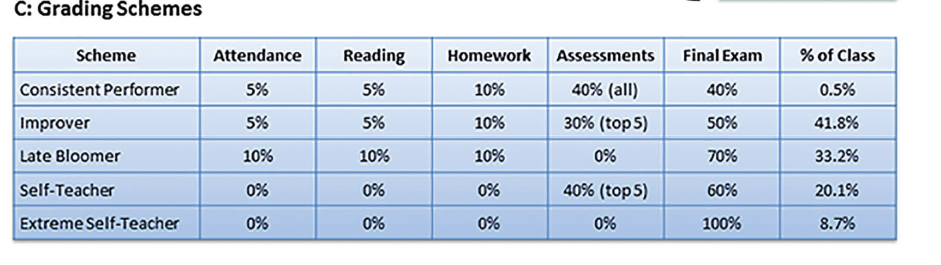

The TED Talk gave a fantastic overview, however. Essentially, you can use the same multiple choice test, but assign different values and schemes to the scoring. The concept here is to eliminate the guesswork and improve the credibility of the test as an effective evaluation of knowledge.

https://youtu.be/ACB2B2EdiXs

Martin describes a few different methods, which I'll try to simplify here:

Negative Marking: 3 marks for a correct response, -1 for incorrect.

- a) wrong
- b) wrong X
- c) correct
- d) wrong

Student would receive -1 for this question.

The theory here is that for guessing, there is now a 1/4 chance of scoring 3 marks and a 3/4 chance of scoring -1, so on average these cancel out and a pure guess is worth nothing. This can, however, ultimately lower test averages, and nobody likes being graded in a negative environment. That said, my profession is very much like this: do well and save a life, get 3 points; do poorly and not save the life, -1.

Subset Selection: You may select more than one answer.

- a) wrong
- b) wrong X -1
- c) correct X +3
- d) wrong

Student would receive 2 marks.

- a) wrong
- b) wrong X -1
- c) correct X +3
- d) wrong X -1

Student would receive 1 mark.

This marking scheme begins to demonstrate knowledge, particularly in the common case where the student is able to eliminate two options and is left with the classic 50:50; now they may select both answers and achieve 2 marks. A concern I have here: how much time will students spend debating what they're willing to risk on each question? Should I go for the 3 marks, or play it safe with 2? Will this increase test anxiety?

Subset Selection Without Negative Marking:

- a) wrong
- b) wrong X
- c) correct X
- d) wrong

Student would receive 1/2 mark.

- a) wrong
- b) wrong X
- c) correct X
- d) wrong X

Student would receive 1/3 mark.

- a) wrong
- b) wrong
- c) correct X
- d) wrong

Student would receive 1 mark.

- a) wrong
- b) wrong
- c) correct
- d) wrong

Student would receive 1/4 mark.

I find this to be a highly positive test, where one could answer nothing and still achieve 25%!
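To make the three schemes concrete, here is a minimal Python sketch that reproduces the marks from the worked examples above. The function names are my own, and a few details are assumptions not stated in the talk summary: a blank answer scores 0 under Negative Marking, and under Subset Selection Without Negative Marking a selection that misses the correct option scores 0, while a blank is treated as selecting every option.

```python
from fractions import Fraction


def negative_marking(selected, correct, reward=3, penalty=1):
    """One answer allowed: +reward if right, -penalty if wrong.

    Assumption: a blank answer (selected is None) scores 0.
    """
    if selected is None:
        return 0
    return reward if selected == correct else -penalty


def subset_selection(selected, correct, reward=3, penalty=1):
    """Several answers allowed: +reward if the correct option is among
    them, -penalty for every wrong option selected."""
    return sum(reward if option == correct else -penalty for option in selected)


def subset_without_negative(selected, correct, options="abcd"):
    """Several answers allowed, no penalties: 1/k of a mark when the
    correct option is among the k selected.

    Assumptions: a blank counts as selecting every option, and a
    selection that misses the correct option scores 0.
    """
    chosen = set(selected) or set(options)
    if correct not in chosen:
        return Fraction(0)
    return Fraction(1, len(chosen))


# Average mark for a blind guess under Negative Marking (correct answer is "c").
guess_average = Fraction(sum(negative_marking(o, "c") for o in "abcd"), 4)

print(guess_average)                                # 0
print(subset_selection({"b", "c"}, "c"))            # 2
print(subset_selection({"b", "c", "d"}, "c"))       # 1
print(subset_without_negative({"b", "c"}, "c"))     # 1/2
print(subset_without_negative(set(), "c"))          # 1/4
```

Looping one of these functions over an answer key would give a whole-test score, which may be a quick way to compare the schemes on an existing test before committing to one.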
This final method is still being studied to determine its effectiveness; however, Subset Selection was presented as statistically matching the profile of what a good test is meant to show, namely that increasing knowledge yields increasing test scores. My initial thoughts are that this would be easy to implement with current tests, but the grading would be significantly more time consuming. Last year my school switched from "scan-tron" to "grade-cam"; both utilize bubble sheets, but now we can mark instantly with a smartphone app or classroom camera. This technology would not accommodate this type of grading and the selection of multiple different answer patterns, which would mean marking 70 multiple choice questions for 30 students, where each answer must be interpreted, not just mindlessly checked against a defined answer key, and we don't have TAs! I have an opportunity to implement this in a few weeks with a smaller class size, if the other group teachers agree - a multiple choice test written in our smaller lab sections. This would also give me great insight compared to traditional scores, in an easily manageable group of students. I need to spend some time playing with the scoring variability to determine if I prefer Subset Selection or Subset Selection Without Negative Marking. Just from playing with some numbers, I can already appreciate how these methods were shown to lower test averages, as students will likely achieve many half marks, but I do like this concept of working to eliminate some of the guesswork and better demonstrate their thought process of ruling out options. I wonder, however, whether answering a question with the most correct of the incorrect options also displays this. They didn't pick the right option, but they picked the next best one. I'd love to hear any thoughts or other variations people have tried with this, and opinions from either a student or teacher perspective.
If a multiple choice test is being utilized, is this a better option than the traditional scoring?

I’ve been trying to put some further reflection into my testing methods in the upper year pathophysiology class I teach. As I have mentioned briefly, the course has traditionally been tested using high-stakes multiple choice tests. These tests have been designed to follow the structure of the provincial certifying exam written after graduation. My program has held the highest ranking on this provincial test for the past 13 years, with a 100% pass rate, and no other school is close to boasting that. I understand, however, that just because this is the way we’ve always done it does not mean that it is the right way. In my last post I looked at an alternative grading scheme that could even continue to utilize the current tests, but that would perhaps give students the best opportunity to do well. On one hand it doesn’t penalize a single poor performance if one achieves well elsewhere; on the other hand, in the field of paramedicine failing to do well on a single call may have drastic consequences, and hence we’ve always been okay with high-stakes testing. I’ve also heard of a multiple-choice scheme where the test is given in class, which is worth two-thirds, and then the test is taken home until next class for the students to write again using their notes and resources, worth the remaining third, in an attempt to deepen learning.

A multiple choice guide from the University of Texas gives a few tips for creating multiple choice tests. It was interesting to see how many of the “do’s” I don’t do. Why? For the same reason I’ve brought up previously: the provincial certification exam is written in a confusing manner with often poorly written questions, so as a program we take it upon ourselves as a duty to prepare students for this exam, which will then allow them to find gainful employment and a fulfilling career. After all, is this not why they came to college in the first place?

The Texas document does discuss some positives of multiple choice testing, which I have found highly beneficial, namely the quick feedback and the ability to statistically analyze the data. It does warn, however, that learning can be lessened if the test is written poorly, as students will study for recognition, not recall. Additionally, poorly written tests will allow test-wise students to find answers throughout the test, which I do on purpose to teach students how to better write tests. It suggests avoiding tricks, minimizing reading, and ensuring there is just one correct answer. I incorporate small degrees of all of these, again to teach students how to write such tests, as not only will their provincial exam be like this, but the majority of their hiring-competition written tests as well. It is as though those tests are often written by people trying to prove how smart they are, not to ascertain the knowledge of the applicants.

Since I took over this course two years ago, I’ve taken the degree of multiple choice down to 70% of each test, with 30% short-answer, to further emphasize deeper learning and engagement with the material. I’m brainstorming ideas to incorporate more case-based learning and inquiry through assignments, to supplement the traditional exam style of the class. My thoughts are that this may increase engagement, give the students more ownership of their learning, and improve material contact, while still administering the now lower-stakes multiple choice tests to ensure post-graduation readiness. As I’m in the reflection / brainstorming phase, I would greatly appreciate any feedback and experience from those under the pressures of standardized tests who have been influenced to teach to a test, and from those who have successfully made the leap away from the traditional.
https://facultyinnovate.utexas.edu/teaching/check-learning/question-types/multiple-choice

Currently within my program and individual courses, there is so much material crammed in that we utilize every minute of our class time, and often more. This is in addition to having an overloaded program, where students carry above what is considered a full-time complement of courses. To this end, we often discuss assessment. We’ve undergone a radical and effective evaluative change in our practical lab components; however, our theory classes have remained very traditional. The biggest driving factor of this is the provincial certification exam post-graduation, which is a six-hour comprehensive multiple choice exam, written to be confusing, to distract with non-essential material, with page-long questions, and with a focus on multiple-multiples. I don’t agree with the format of this exam, but that’s for a different post. So, that’s why we haven’t yet ventured towards other assessment strategies: we feel our students need the practice of this style of exam and questions. As a college program, our job is to help students acquire the skills needed to obtain employment and build a successful career and healthy life through their chosen profession, and part of this is ensuring they will obtain certification.

Today I was reading an article from Faculty Focus, and within the comments there was the following shared resource: http://www.lifescied.org/content/16/1/ar2

This article shed some light on this dilemma for me. It offers a way to continue to give students the style of questions they need to practice in a legitimate setting, and a novel way of evaluating to improve overall performance as well as impart successful generalizable strategies. The premise is to provide students with weekly cumulative exams, immediate feedback, and encouragement to improve upon metacognitive strategies.
This demonstrated increased student success in higher order questions, even when question content had not been tested before. To take this a step further, the study also shows how a grading scheme was used that incorporated five different weights to each assessment, to allow for students to improve with time, decline with time, or maintain performance. Marks were input to a spreadsheet, and whatever method identified the highest mark, that was the score utilized for that student. It helps students by:

Be Aware Of:

This is certainly an idea I’m going to let fester a bit and discuss with my colleagues. We currently have classes with infrequent and high-stakes exams; is this truly necessary? The biggest concern I initially hold is the class time this would utilize. Could this be offset by increased readings / self and group work assignments? I think the biggest benefit is the increase in student metacognitive and self-regulating skills.

Today I read a recent article on Faculty Focus, “Assignment Helps Students Assess Their Progress” by Christina Moore, where she describes a simple yet highly effective way to not only check on the progress of your students, but more importantly, for the students to check in on themselves.

Following this outline, I will set up a simple Moodle assignment for my class to do, which will not be graded, but will be a mandatory assignment, where the following course material will not be available until this is complete. I am going to ask the students to submit a journal style reflection of their progress in the course thus far, if it meets their expectations, what their goals are for the remainder of the course, and how they intend to meet those goals. The assignment will also involve the student stating their current grades and attendance. I've been looking for ways to continuously improve the self-regulated skills of my students, and I believe this will be an easy task to employ which will yield great results. Students will employ self-reflection, and make an honest self-evaluation statement about where they stand in the course, taking ownership for their performance and attendance. Many students will likely just need to continue their current habits going forward, some will need to form new strategies, but all will be reflecting at an opportune time in the semester. This will also allow me to gauge the overall feel of the class as a whole, and offer personalized feedback, opening up dialogue that some students may otherwise not have had. It will further promote feedback undoubtedly about the course itself and perhaps my own teaching practices and material, which will also give me an opportunity to reflect, adapt, and carry on. Win-win. |

Author

I teach Paramedicine at Cambrian College, in Sudbury, Ontario. I also continue to work as a paramedic, and ride bikes. This is my third semester in the PME program, and I look forward to learning with everyone!

Archives

March 2017

Categories |
